BWFNet: 3D Building Reconstruction from Single Off-Nadir Remote Sensing Image with Semi-Weak Supervisions


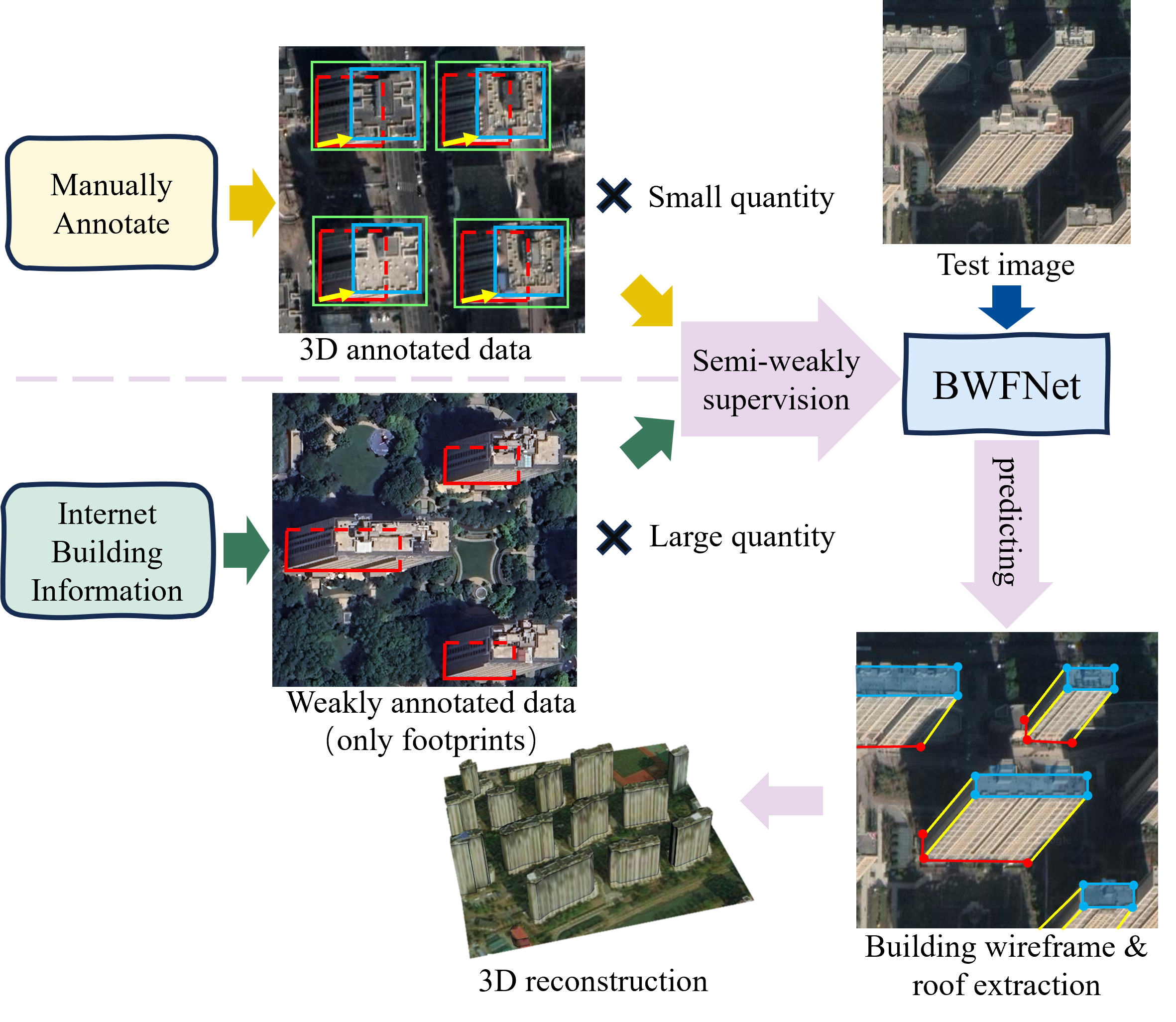

Rapid 3D building reconstruction in urban-scale areas has emerged as a pivotal technology for
smart city applications. Recent methods that reconstruct buildings from single off-nadir imagery
have gained attention due to their efficiency in both time and data costs. However, training
these methods relies on large-scale, costly 3D annotations, including building bounding
boxes, roofs, footprints, and roof-to-footprint offsets. They therefore cannot be trained when only
footprints are available, even though large numbers of building footprints can be
easily obtained from crowdsourced building datasets on the Internet. To address this, we propose
a semi-weakly supervised learning method that leverages massive weakly annotated data
(footprints) and a limited number of manually annotated 3D building labels to learn to
reconstruct 3D buildings. In our method, we introduce a wireframe representation to
replace the conventional bounding-box representation, thereby providing a foundation for semi-weakly
supervised learning. Based on this representation, we propose BWFNet for extracting building
wireframes. BWFNet enhances accuracy under semi-weak supervision by modeling both structural
and local knowledge. Furthermore, we propose a training strategy for building wireframe
extraction grounded in geometric consistency constraints to further improve
weakly supervised performance. Experimental results demonstrate that BWFNet
achieves excellent reconstruction performance using only 3% fully annotated data combined
with weakly supervised samples, a significant improvement over
current state-of-the-art methods.
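
The abstract does not spell out the geometric consistency constraints, so the following is only an illustrative sketch under a stated assumption: in an off-nadir image, a building's roof polygon is approximately its footprint translated by the roof-to-footprint offset. Under that assumption, a sample annotated with a footprint alone can still constrain a predicted roof and offset. The function names `translate_polygon`, `estimate_offset`, and `consistency_error` are hypothetical and are not taken from BWFNet.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def translate_polygon(polygon: List[Point], offset: Point) -> List[Point]:
    """Shift every vertex of a polygon by the same 2D offset."""
    dx, dy = offset
    return [(x + dx, y + dy) for x, y in polygon]


def estimate_offset(roof: List[Point], footprint: List[Point]) -> Point:
    """Least-squares translation from footprint to roof.

    Assumes both polygons list corresponding vertices in the same order;
    the optimal translation is then the mean of the vertex differences.
    """
    if len(roof) != len(footprint) or not roof:
        raise ValueError("polygons must be non-empty and of equal length")
    n = len(roof)
    dx = sum(r[0] - f[0] for r, f in zip(roof, footprint)) / n
    dy = sum(r[1] - f[1] for r, f in zip(roof, footprint)) / n
    return (dx, dy)


def consistency_error(roof: List[Point], footprint: List[Point], offset: Point) -> float:
    """Mean distance between roof vertices and the offset-shifted footprint.

    Assumed constraint (not stated in the abstract): roof = footprint + offset.
    A value of 0 means the three quantities are mutually consistent.
    """
    if len(roof) != len(footprint) or not roof:
        raise ValueError("polygons must be non-empty and of equal length")
    shifted = translate_polygon(footprint, offset)
    total = sum(math.hypot(rx - sx, ry - sy) for (rx, ry), (sx, sy) in zip(roof, shifted))
    return total / len(roof)


# Toy example: a known footprint (weak label) and a hypothetical predicted offset.
footprint = [(0.0, 0.0), (4.0, 0.0), (4.0, 3.0), (0.0, 3.0)]
predicted_offset = (2.0, -1.0)
predicted_roof = translate_polygon(footprint, predicted_offset)
error = consistency_error(predicted_roof, footprint, predicted_offset)
```

In a training loop, an error of this kind could serve as an additional loss term on footprint-only samples. Whether BWFNet formulates its constraint this way is not confirmed by the abstract.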